What Is a robots.txt File? An SEO Guide for robots.txt

Robots.txt is a file that tells search engine crawlers, also called spiders, which URLs they can access on a website. In other words, it limits what web crawlers are allowed to do on your site: with the help of this file, spiders know which pages they should not crawl. There are three main reasons to use a robots.txt file: blocking non-public pages, optimizing your crawl budget, and preventing the indexing of resources. Most major search engines, such as Google and Yahoo, recognize this file and honor it.

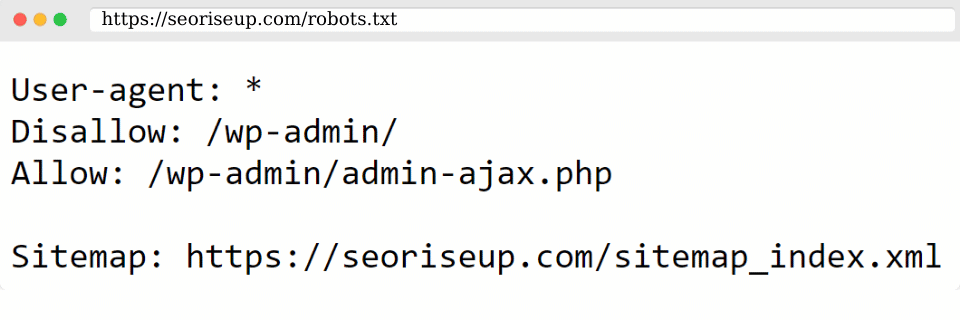

The location of your robots.txt is the root of your site.
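
As a rough illustration, here is a minimal Python sketch that works out where a site's robots.txt should live, given the URL of any page on that site. The function name `robots_txt_url` and the example.com address are placeholders made up for this example.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Return the robots.txt URL for the site that serves page_url."""
    parts = urlsplit(page_url)
    # Keep only the scheme and host; drop the page path, query, and fragment,
    # because robots.txt sits at the root of the site.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


# Hypothetical page URL, used only for illustration.
print(robots_txt_url("https://www.example.com/blog/seo-guide?page=2"))
# https://www.example.com/robots.txt
```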

Why is the robots.txt file important?

Most websites don’t need a robots.txt file, because Google can usually find and index all the required pages on its own and automatically skips pages that are not wanted. Having said that, there are three major reasons to use a robots.txt file as part of your routine SEO audits.

1) You can block non-public pages. Sometimes you have pages that you don’t want indexed. You want them to exist, but you don’t want people to land on them from search results. In this case, you can use robots.txt to block these pages from search engine spiders and crawlers.

2) You can prevent the indexing of your resources. You can use meta directives to block some pages from indexing, but they don’t work well for multimedia resources such as images and PDFs. This is where robots.txt comes in.

3) You can maximize your crawl budget. If Googlebot has trouble getting through all of your pages, you may have a crawl budget problem. If you block the pages that don’t matter, Googlebot can spend your budget on the pages that do.

Robots.txt Examples

There are many ways to write a robots.txt file. Here are ten templates that you can use for different needs:

1) You can block all pages. This stops all bots from crawling your site. It is useful if your site is not ready yet, if you don’t want the site to appear in Google search results, or if it is a staging website used to test changes.

2) You can allow Googlebot to crawl your entire website and web pages.

3) You can block a single file. There might be specific files that you don’t want to appear in search results.

4) You can allow only Googlebot. Robots.txt lets you add rules that apply only to specific bots.

5) On the other hand, you can disallow a specific bot. This prevents that bot from accessing your site.

6) Sometimes, you need to block an area of the site and allow access to the rest of it. So you can block folders using robots.txt.

7) You can block all files with a specific file extension, for example when you don’t want Googlebot to waste time reading your spreadsheets.

8) You can point to your sitemap, which lists all the URLs on your website. This makes it easier for bots to find the links on your site.

9) You can draw a robot in robots.txt using comment lines, which crawlers ignore. Site visitors who open the file can see it. This one is the fun part.

10) You can control the crawl speed by setting a crawl delay, so the bot looks through your pages more slowly.
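
To make some of the templates above concrete, here is a hedged Python sketch that writes a few of them out and checks how a parser reads them. The file contents, the paths such as `/admin/`, the bot name `BadBot`, and the example.com domain are all illustrative assumptions, not rules taken from a real site. The sketch uses Python's built-in `urllib.robotparser`, which does simple prefix matching and does not interpret wildcard patterns, so the file-extension template is left out. Real search engines may also resolve conflicting rules differently.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical templates; paths, bot names, and the domain are placeholders.
TEMPLATES = {
    # 1) Block every bot from the whole site.
    "block_all": "User-agent: *\nDisallow: /\n",
    # 2) Allow everything (an empty Disallow blocks nothing).
    "allow_all": "User-agent: *\nDisallow:\n",
    # 3) Block a single file.
    "block_file": "User-agent: *\nDisallow: /private-report.html\n",
    # 4) Allow only Googlebot; every other bot is blocked.
    "only_googlebot": (
        "User-agent: Googlebot\nDisallow:\n\n"
        "User-agent: *\nDisallow: /\n"
    ),
    # 5) Block one specific bot and leave the rest alone.
    "block_one_bot": "User-agent: BadBot\nDisallow: /\n",
    # 6) Block a folder but allow the rest of the site.
    "block_folder": "User-agent: *\nDisallow: /admin/\n",
    # 8) and 10) Point to a sitemap and ask bots to slow down.
    "sitemap_and_delay": (
        "User-agent: *\nCrawl-delay: 10\n"
        "Sitemap: https://www.example.com/sitemap.xml\n"
    ),
}

SITE = "https://www.example.com"


def load(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text held in memory (no network request)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def is_allowed(template: str, user_agent: str, path: str) -> bool:
    """Check whether user_agent may fetch SITE + path under a template."""
    return load(TEMPLATES[template]).can_fetch(user_agent, SITE + path)


print(is_allowed("block_all", "Googlebot", "/"))                # False
print(is_allowed("only_googlebot", "Googlebot", "/blog/"))      # True
print(is_allowed("only_googlebot", "Bingbot", "/blog/"))        # False
print(is_allowed("block_folder", "Googlebot", "/admin/users"))  # False
print(is_allowed("block_folder", "Googlebot", "/blog/"))        # True

extras = load(TEMPLATES["sitemap_and_delay"])
print(extras.crawl_delay("Googlebot"))  # 10
print(extras.site_maps())               # needs Python 3.8 or newer
```

Keep in mind that not every crawler honors every directive. Google, for example, documents that it ignores the crawl-delay rule.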

WordPress robots.txt

Modern websites contain many elements, and WordPress lets you install plugins that usually come with their own directories. When you build a WordPress website, it automatically sets up a virtual robots.txt, and search engines index all WordPress sites by default, so you don’t strictly need to add a robots.txt file for WordPress. To boost your SEO, however, it is a good idea to add a robots.txt file to your root directory to stop search engines from accessing specific areas of your WordPress website.
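
As a rough sketch of what such a file could contain, the Python snippet below builds the text of a robots.txt that keeps crawlers out of a couple of areas. The helper name `build_robots_txt`, the `/private-area/` path, and the sitemap URL are assumptions for illustration only. Which areas you block depends on your own site.

```python
from urllib.robotparser import RobotFileParser


def build_robots_txt(blocked_paths, sitemap_url=None):
    """Build robots.txt text that blocks the given paths for all bots."""
    lines = ["User-agent: *"]
    lines += [f"Disallow: {path}" for path in blocked_paths]
    if sitemap_url:
        # The Sitemap line stands on its own, outside the user-agent group.
        lines += ["", f"Sitemap: {sitemap_url}"]
    return "\n".join(lines) + "\n"


# Hypothetical areas to block; adjust them to your own site.
robots_txt = build_robots_txt(
    ["/wp-admin/", "/private-area/"],
    sitemap_url="https://www.example.com/sitemap.xml",
)
print(robots_txt)

# Quick sanity check with Python's built-in parser.
parser = RobotFileParser()
parser.parse(robots_txt.splitlines())
print(parser.can_fetch("Googlebot", "https://www.example.com/wp-admin/options.php"))  # False
print(parser.can_fetch("Googlebot", "https://www.example.com/blog/hello-world/"))     # True
```

The resulting text would then be saved as robots.txt in the root directory of the site.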

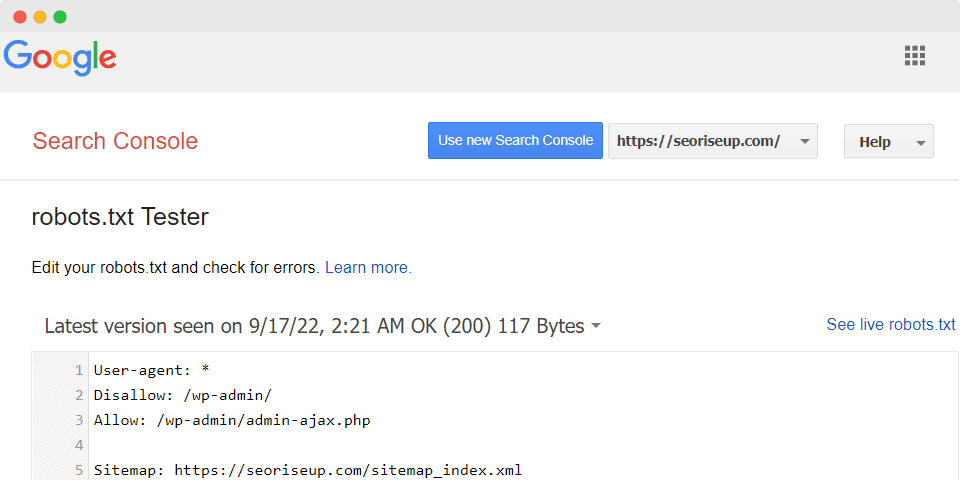

Robots.txt Testing Tool

The robots.txt testing tool shows whether your robots.txt file blocks web crawlers from specific URLs on your site. For example, you can use this tool to test whether the Googlebot-Image crawler can crawl the URL of an image that you wish to block from Google Image Search. Here are the steps to follow to test your robots.txt file:

1. Open the tester tool and scroll through the robots.txt code to locate the highlighted syntax warnings and logic errors.

2. Type the URL of a page on your site into the text box at the bottom of the page.

3. Select the user-agent you want to simulate in the dropdown list.

4. Click the TEST button to test access, then check what the TEST button reads to see whether the URL is blocked.

5. Edit the file on the page and retest it if necessary.

6. Copy your changes to your robots.txt file on your site.
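
If you want a quick local approximation of the same check, the steps above can be sketched in Python with the built-in `urllib.robotparser` module. This is not the testing tool itself, and its matching may differ from Google's in edge cases. The robots.txt content, the image paths, and the `check_access` helper are hypothetical examples.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that hides one image folder from Googlebot-Image.
ROBOTS_TXT = """\
User-agent: Googlebot-Image
Disallow: /images/private/

User-agent: *
Disallow:
"""


def check_access(robots_txt: str, user_agent: str, url: str) -> str:
    """Simulate one crawler requesting one URL under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return "allowed" if parser.can_fetch(user_agent, url) else "blocked"


private_image = "https://www.example.com/images/private/photo.png"
public_image = "https://www.example.com/images/logo.png"

print(check_access(ROBOTS_TXT, "Googlebot-Image", private_image))  # blocked
print(check_access(ROBOTS_TXT, "Googlebot-Image", public_image))   # allowed
print(check_access(ROBOTS_TXT, "Googlebot", private_image))        # allowed
```

Changing the user agent in the call plays the same role as picking a different crawler in the dropdown list.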

Edit robots.txt file

The best way to edit robots.txt is by using a WordPress SEO plugin, which lets you take control of your website and configure a robots.txt file. Editing your robots.txt file is an advanced feature, and a mistake can cause your site’s pages to be listed improperly in search results. Either way, here is how to edit your robots.txt:

1. Go to your site dashboard.

2. Click Marketing & SEO.

3. Click SEO Tools.

4. Click Robots.txt Editor.

5. Click View File.

6. Add your robots.txt file info by writing the directives in the "This is your current file:" text box.

Frequently Asked Questions

What is the use of the robots.txt file?

A robots.txt file tells search engine crawlers and bots which pages they can access on your website. You can use it to block specific pages that you don’t want to show up in search results.

Does the robots.txt file help with SEO?

Yes. It helps search engine crawlers know which pages should be crawled and which should not. You can use this file to block some pages and keep them from showing up in the search results.

Conclusion

In this article, we explained the importance of robots.txt for SEO and for websites. It is important that web crawlers know which URLs they can and cannot access. There are three main reasons to use robots.txt: blocking non-public pages, maximizing your crawl budget, and preventing the indexing of resources. There are many examples of robots.txt rules, from blocking all access to blocking only specific bots, files, or folders. If you have a WordPress website, it will automatically set up a virtual robots.txt.